Jan 30 2012

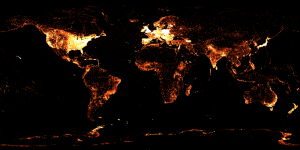

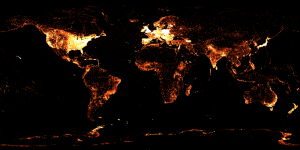

Check out the Wikipedia Map Interface developed by RENDER! The map is generated from all geotagged articles in a dozen different language Wikipedias. The dynamic interface lets you zoom into the map and open the individual articles. The different language editions show a strong bias in the regions they cover.

Find the Wikipedia Map Interface here: http://km.aifb.kit.edu/sites/wikipediamap/

Nov 04 2011

RENDER was mentioned in the news on two major German websites.

Heise online and taz.de reported on Wikimedia’s plan to build a centralized database that will be shared across all language versions of Wikipedia, a project in which RENDER is involved.

Read the full articles on heise online and taz.de.

Aug 02 2011

The Economist’s article about the dangers of the Internet goes into detail about the so-called “filter bubble”: a unique universe of information for each person, which we experience every day on Google, Amazon, Facebook and Co. These sites take our location, interests, previous browsing behavior, etc. into account and present us with personalized results. That sounds good, but is this all we want?

Eli Pariser and other critics think this is dangerous. They believe this approach prevents us from seeing and using the full potential of the Internet, because we are never presented with information that doesn’t fit into our own universe of opinions and interests. Eli Pariser calls this “invisible autopropaganda, indoctrinating us with our own ideas”. In his book “The Filter Bubble: What the Internet Is Hiding from You” he goes into detail on how such a filtered Internet can be dangerous and how sites can give users more control over their personal data.

Jul 20 2011

Fabian Flöck gave an interesting talk on the topic “Towards a diversity-minded Wikipedia” at the ACM 3rd International Conference on Web Science in Koblenz, Germany. You can find his talk on VideoLectures.net as well as in our presentations section. The corresponding paper can be downloaded here.

Jun 27 2011

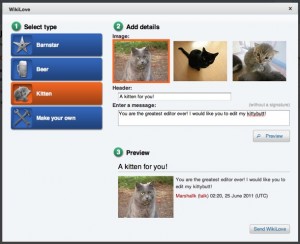

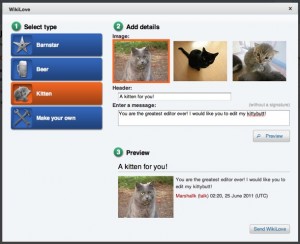

Wikipedia is introducing the WikiLove button. It is already live on the Wikipedia test site and will be available site-wide on Wednesday, June 29th. It appears in the right-hand corner of each user’s page, in the form of a little red heart. Users are encouraged to click the button when they come across edits or other on-site activity that deserves commendation.

Clicking the button launches a Love Interface, in which the user is presented with a number of options for what kind of love to send and images to append to a free-text compliment area. The resulting declaration of support is published to the receiving user’s discussion page and is monitored by top Wikipedia users to prevent misuse.
